Effective Bird Sound Classification

Classifying bird sounds from audio doesn’t need a fancy pipeline. Mel spectrograms (2D “pictures” of sound whose frequency axis is tuned to how we hear) plus a small image-style network such as EfficientNet get you most of the way, and the whole thing runs on a single GPU or even a CPU. Here’s how the pieces fit together.

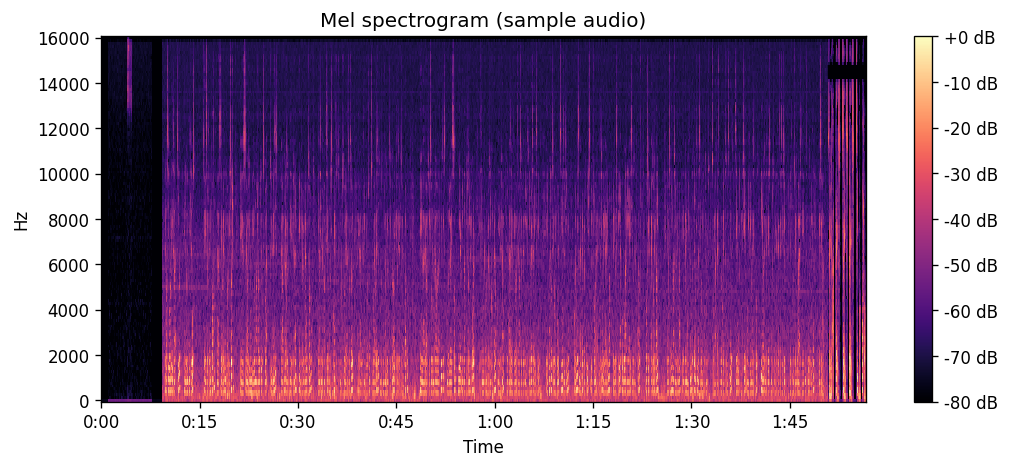

Why mel spectrograms?

Raw audio is a long waveform. To use it with an image model, we need a 2D “picture” of the sound. A spectrogram does that: one axis is time, the other is frequency (pitch), and the brightness at each point is how much energy the sound has at that time and frequency. You get it by chopping the audio into short (usually overlapping) windows and measuring the energy in each frequency band within each window. That operation is the short-time Fourier transform (STFT).
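To make the windowing step concrete, here is a minimal NumPy sketch. It is not the code behind this post: the window length (1024 samples), hop (256 samples), Hann window, 22,050 Hz sample rate, and the 4 kHz test tone are all assumptions picked for illustration.

```python
import numpy as np


def power_spectrogram(waveform, n_fft=1024, hop_length=256):
    """Return power per frequency bin and frame, shape (n_fft // 2 + 1, n_frames)."""
    if len(waveform) < n_fft:
        raise ValueError("waveform is shorter than one analysis window")
    window = np.hanning(n_fft)  # taper each window to reduce spectral leakage
    n_frames = 1 + (len(waveform) - n_fft) // hop_length
    # Chop the audio into overlapping windows, one row per window.
    frames = np.stack([
        waveform[i * hop_length: i * hop_length + n_fft] * window
        for i in range(n_frames)
    ])
    spectrum = np.fft.rfft(frames, axis=1)  # FFT of every window
    return (np.abs(spectrum) ** 2).T  # rows = frequency, columns = time


# Illustrative input: one second of a pure 4 kHz tone (assumed values).
sample_rate = 22050
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 4000.0 * t)

spec = power_spectrogram(tone)
bin_hz = np.fft.rfftfreq(1024, d=1.0 / sample_rate)  # center frequency of each row
peak_hz = bin_hz[spec.mean(axis=1).argmax()]  # row holding the most energy
print(spec.shape, round(float(peak_hz), 1))
```

The brightest row lands on the frequency bin nearest 4 kHz, which is exactly the “brightness equals energy at that time and frequency” picture described above. In a real project you would more likely call an audio library’s STFT than hand-roll the loop.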

A mel spectrogram is the same idea, but the frequency axis is warped to the mel scale, which is tuned to how we hear: equal steps in mel sound like roughly equal steps in pitch. For bird calls, which sit in the mid-to-high range, that representation often helps the model learn better than a plain linear-frequency spectrogram. The pipeline is: waveform → frequency analysis (STFT) → mel filter bank → optional log scale. Out comes a 2D image you can feed into a convolutional network.
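The last two stages of that pipeline (mel filter bank, then log scale) can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the post's actual preprocessing: it uses one common mel formula, mel = 2595 · log10(1 + f / 700), triangular filters, 64 mel bands, and a made-up power spectrogram with all its energy in a single frequency bin.

```python
import numpy as np


def hz_to_mel(hz):
    # One common mel formula (assumed here); other variants exist.
    return 2595.0 * np.log10(1.0 + np.asarray(hz, dtype=float) / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (np.asarray(mel, dtype=float) / 2595.0) - 1.0)


def mel_filter_bank(sample_rate, n_fft, n_mels, f_min=0.0, f_max=None):
    """Triangular filters equally spaced in mel, shape (n_mels, n_fft // 2 + 1)."""
    f_max = sample_rate / 2 if f_max is None else f_max
    # n_mels + 2 edge points: each filter spans three consecutive edges.
    edges_hz = mel_to_hz(np.linspace(hz_to_mel(f_min), hz_to_mel(f_max), n_mels + 2))
    bin_hz = np.fft.rfftfreq(n_fft, d=1.0 / sample_rate)
    bank = np.zeros((n_mels, bin_hz.size))
    for m in range(n_mels):
        lo, center, hi = edges_hz[m], edges_hz[m + 1], edges_hz[m + 2]
        rising = (bin_hz - lo) / (center - lo)
        falling = (hi - bin_hz) / (hi - center)
        bank[m] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank


def log_mel(power_spec, bank, eps=1e-10):
    """Project (n_freqs, n_frames) power onto mel bands, then convert to decibels."""
    return 10.0 * np.log10(bank @ power_spec + eps)


# Illustrative input: 4 frames with energy only in FFT bin 186 (assumed values).
sample_rate, n_fft, n_mels = 22050, 1024, 64
power_spec = np.zeros((n_fft // 2 + 1, 4))
power_spec[186, :] = 1.0

bank = mel_filter_bank(sample_rate, n_fft, n_mels)
mel_image = log_mel(power_spec, bank)
loudest_band = int(mel_image[:, 0].argmax())
print(mel_image.shape, loudest_band)
```

The result is a 64-row image instead of a 513-row one. Low frequencies keep fine resolution while high frequencies are pooled into wider bands, which is the warping described above.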

Using EfficientNet as the backbone

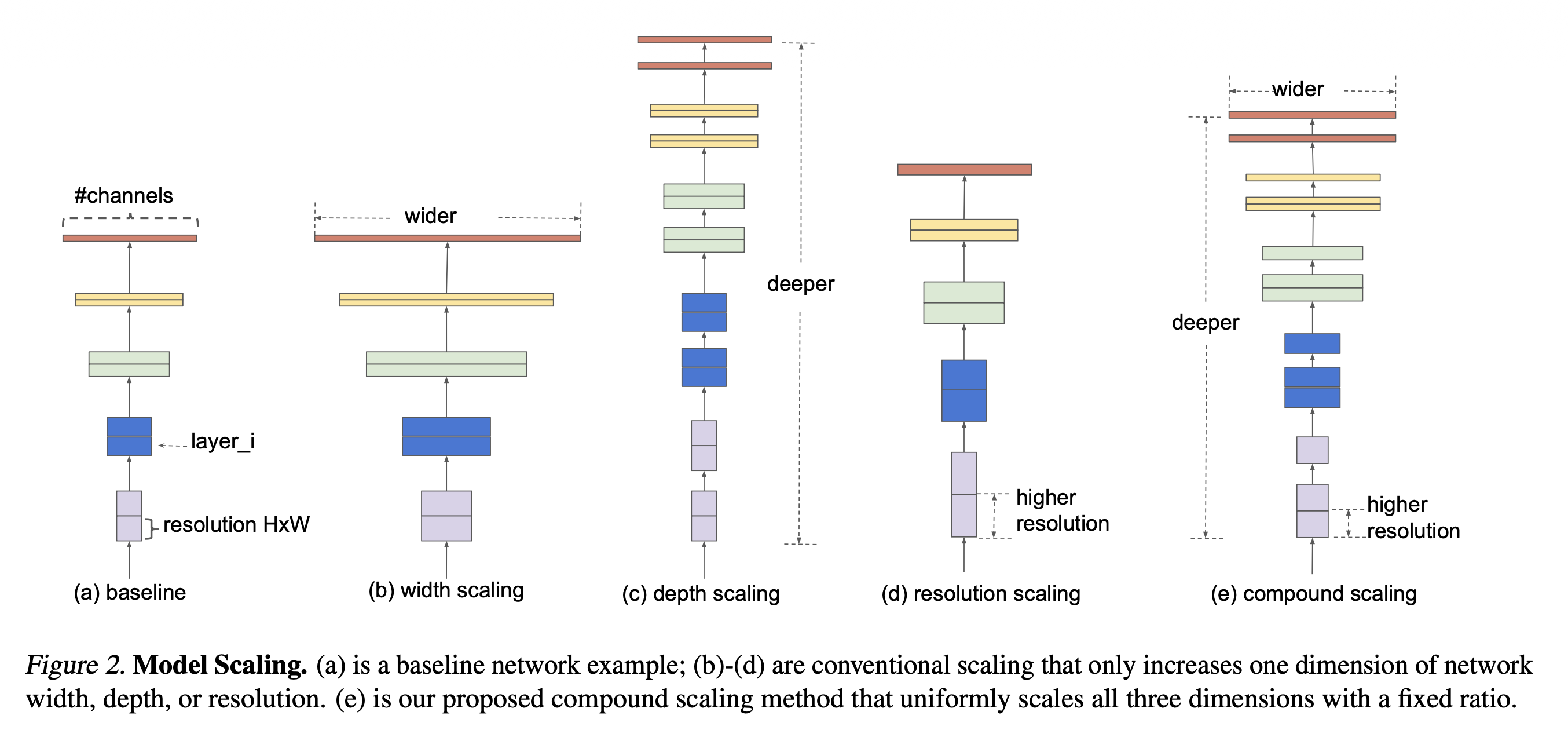

EfficientNet is a family of convolutional networks that scales depth, width, and input resolution together in a balanced way. I used a small variant (B0 or B3) so training and inference could run on a single GPU or even a CPU. The input is one mel spectrogram per clip, treated as a single-channel image. The output is a probability per species, and I treated the task as multi-label because a clip can contain more than one bird. That setup gave a good trade-off between accuracy and speed; if you have more data and compute, you can step up to a larger variant.
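The multi-label part is worth a small illustration, because it changes the output stage: each species gets its own independent sigmoid rather than sharing one softmax, and the loss is binary cross-entropy per species. The NumPy sketch below covers only that output stage and stands in for whatever the backbone produces. The species names, logits, targets, and the 0.5 decision threshold are all made up for the example.

```python
import numpy as np


def sigmoid(logits):
    return 1.0 / (1.0 + np.exp(-logits))


def binary_cross_entropy(logits, targets):
    """Mean per-species BCE computed from logits in a numerically stable form."""
    loss = (
        np.maximum(logits, 0.0)
        - logits * targets
        + np.log1p(np.exp(-np.abs(logits)))
    )
    return float(loss.mean())


# Hypothetical species and network outputs for two clips.
species = ["robin", "wren", "blackbird"]
logits = np.array([[2.0, -1.5, 0.3],
                   [-3.0, 4.0, -2.0]])
targets = np.array([[1.0, 0.0, 1.0],   # clip 1 contains two birds
                    [0.0, 1.0, 0.0]])  # clip 2 contains one

probs = sigmoid(logits)   # independent probability per species
predicted = probs >= 0.5  # assumed threshold; tune per species in practice
loss = binary_cross_entropy(logits, targets)

for row in predicted:
    print([name for name, present in zip(species, row) if present])
```

Because the probabilities are independent, a row does not have to sum to 1, so the first clip can be flagged as containing both a robin and a blackbird.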

References

- EfficientNet: Tan, M., & Le, Q. V. (2019). EfficientNet: Rethinking model scaling for convolutional neural networks. ICML. arXiv:1905.11946.

- EfficientNetV2: Tan, M., & Le, Q. V. (2021). EfficientNetV2: Smaller models and faster training. ICML. arXiv:2104.00298.

- MobileNetV2: Sandler, M., Howard, A., Zhu, M., Zhmoginov, A., & Chen, L.-C. (2018). MobileNetV2: Inverted residuals and linear bottlenecks. CVPR. arXiv:1801.04381.
